A client came to me with a strange problem.

“I’ve had three people tell me my cover letter sounds AI-generated,” she said. “I didn’t use AI at all. I wrote every word myself.”

If you are asking, “Why do recruiters think I used AI?” the standard answer is: your writing sounds too polished, too generic, or too similar to what AI tools produce. That answer is not wrong, but it is incomplete. The deeper problem is structural, and it is affecting far more candidates than most people realise. This article explains what is actually happening in 2026 and how you can fix it.

I read my client’s letter. She was telling the truth. But I understood why recruiters suspected otherwise.

It was flawless. Professional. Well-structured. And utterly indistinguishable from the hundreds of other cover letters recruiters likely saw that week.

The problem was that it was generic, which made it appear AI-generated.

Another problem is that AI not only writes the same way for everyone, but also trains people to write in its style.

Now, human-written work gets rejected for sounding too perfect, and it is happening to more candidates than most people realise.

Key Takeaways (TLDR): Why Do Recruiters Think I Used AI?

- A trust crisis is reshaping hiring in 2026. 91% of US hiring managers and 89% in Europe have caught or suspected AI-driven candidate misrepresentation.

- Pattern convergence means that applications shaped by the same AI tools now sound identical. Recruiters filter by difference, not polish.

- Only 37% of employers now consider resumes, CVs and credentials reliable indicators of talent.

- The solution is not to write worse. It is to write more specifically, with details that only someone who did the work could supply.

- Generic statements are indistinguishable from AI output. Specific details like tools used, timeframes, locations, and setbacks acknowledged are not.

- Employers are shifting toward behavioural interviews, skills assessments, and process documentation because these are harder to fake with AI.

- The authenticity premium is not about rejecting AI tools. It is about using them to enhance your story, not replace your voice.

The Pattern Convergence Problem

Here is what is happening in 2026.

Everyone is learning “best practices” from the same AI tools. ChatGPT teaches one way to structure a cover letter. Resume.io suggests one way to phrase achievements. LinkedIn optimisation guides recommend the same keywords.

The result: pattern convergence, hundreds of applications that all sound identical.

When recruiters ask themselves, “Why do my applicants all sound like they used AI?” the honest answer is that many of them did, and AI tools inadvertently trained the ones who didn’t to write the same way.

Recent research from Willo’s 2026 Hiring Trends Report found that just 37% of employers now consider resumes/CVs and credentials reliable indicators of talent.

Even more striking: 41% of employers are actively moving away from resume and CV-first hiring altogether.

Why? Because polish no longer proves capability.

Recruiters reject letters that sound generic, robotic, or template-driven.

The detector is often a tired human who has read 1,000 similar applications.

When every application hits the same standard of “perfect,” recruiters filter by difference, not polish.

The Trust Crisis Making This Worse

A November 2025 survey by Greenhouse found that 91% of US hiring managers and 89% in Europe have caught or suspected AI-driven candidate misrepresentation this year.

This trend is still accelerating, and it will likely get worse before it gets better.

Thirty-two per cent of hiring managers reported candidates using AI scripts during interviews.

Thirty-two per cent reported encountering fake voices or backgrounds during video calls.

Eighteen per cent encountered deepfakes. [Source: Greenhouse, November 2025, pg. 11]

Here is the paradox this creates. Write too well, and recruiters suspect AI. Write worse, and you look less capable.

The solution is not writing worse. Instead, it is writing more specifically, which is what I call the authenticity premium.

For years, I (like most career coaches) have taught clients to be specific in competency-based interviews using the STAR technique (Situation, Task, Action, Result). Be detailed. Include metrics. Show your work.

That advice has not changed. What has changed is the context.

In 2026, specificity is not just a good interview and application technique. It is a necessity. It is proof that you are human.

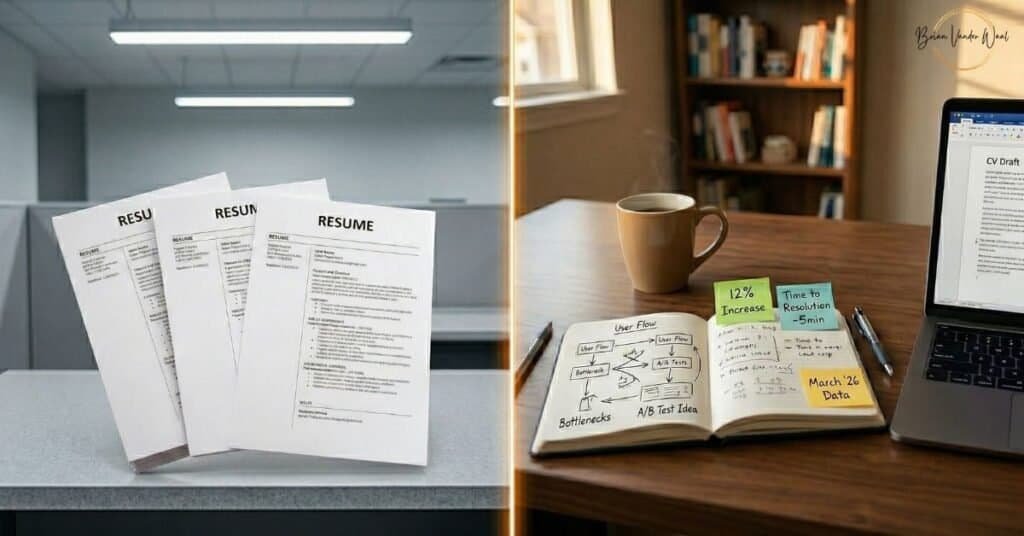

What Makes a Resume or Cover Letter Sound Like AI to Hiring Managers?

Hiring managers think you used AI on your resume or cover letter when your writing has no friction, no specificity, and no evidence that the work you describe actually happened to a real person in a real place at a real time. That absence is what makes a resume or cover letter sound like AI.

Common phrases that trigger AI suspicion in job applications include:

- “I improved team efficiency through process optimisation.”

- “Successfully managed cross-functional stakeholder relationships.”

- “Demonstrated strong leadership in challenging situations.”

Anyone, or any AI, could write these generic statements. These sentences are grammatically perfect, professionally phrased and completely useless.

Only someone who did the work can write statements this specific:

- “I redesigned our inventory system in Excel using VLOOKUP and conditional formatting, cutting stock checks from 4 hours to 45 minutes.”

- “When the supplier delayed our shipment by three weeks, I renegotiated terms with two backup vendors in Leicester and kept production on schedule.”

- “First attempt at remote onboarding failed because half the team couldn’t access our Salesforce instance. I rebuilt the process from scratch with IT over a weekend.”

The difference? Small, unglamorous, and sometimes messy details that prove you were there. Some examples include:

- Tools used.

- Timescales mentioned.

- Locations named.

- Setbacks acknowledged.

- Problems solved messily, not perfectly.

AI can generate the first set of statements. It cannot generate the second as easily, because it has no access to those details until you provide them.

Real Examples from My Practice

Client A: The Cover Letter That Sounded Too Good

Una (name changed) came to me after being told her cover letter “sounded AI-generated” three times, even though she wrote it entirely herself.

The issue was that it was grammatically perfect and well-structured, yet completely generic.

Her original opening: “I am excited to apply for the Operations Manager position. With my proven track record in process improvement and team leadership, I believe I would be an excellent fit for your organisation.”

We rewrote it: “When I joined XYZ Company in March 2023, the intake process took 12 days on average. I mapped every step, identified three bottlenecks in our ERP system, and worked with IT to automate approvals. We cut it to 3 days within four months.”

Same strengths (process improvement, problem-solving), but a completely different level of specificity.

Her application response rate doubled within two weeks.

Client B: The Portfolio That Actually Worked

Sachin (name changed) had a beautifully polished portfolio website. Clean design. Professional case studies. Perfect grammar.

Despite this, he had zero recruiter responses in eight weeks.

We made three changes.

First, we added a “What Didn’t Work” section showing failed approaches. One project started with customer surveys that yielded unusable data. He explained why, how he pivoted to user testing instead, and what he learned.

Second, we documented his process for one project. Not just the final solution, but also the initial approach, the pivot points, and the tools he used (Figma for wireframes, Hotjar for user behaviour, Google Sheets for tracking metrics).

Third, he recorded a three-minute video on his phone explaining his problem-solving approach for one specific challenge.

After these changes, he received his first recruiter call within 3.5 weeks.

The recruiter specifically mentioned the “What Didn’t Work” section. She said, “That’s how I knew it was real. AI doesn’t talk about failure.”

What Is Replacing the Polished Resume and CV

Employers are shifting away from polished resumes because they can no longer verify them.

According to the Willo report and what I am seeing, they are favouring:

1. Behavioural interviews. Structured questions tied to job competencies. They are not asking “Tell me about yourself.” They are asking, “Walk me through the last time a project went off track. What specifically went wrong, and what did you do?”

2. Skills assessments. Short trials, work samples, or paid simulations where you demonstrate capability directly rather than describing it.

3. Process documentation. Evidence that shows not just what you achieved, but how you think. For example, GitHub commits for developers, design process docs for creatives, and problem-solving walkthroughs for analysts.

The common thread? These are harder to fake with AI because they require you to demonstrate real work, not describe it perfectly.

Your Action Plan: How to Make Your Cover Letter Not Sound Like AI

Prove you did the work.

Signalling is not just about adding credentials. It is about adding credentials that prove you did the work.

In Part 3 of this series, I explained how to make yourself findable to recruiters. But being findable is step one. Being credible enough to contact is step two.

This week:

- Add one unglamorous detail to your resume. Pick your strongest achievement. Add the tool you used, the timeframe, and the specific obstacle. Make it impossible to fake.

- Show your thinking. Document how you solved one problem. Not the polished story, but the messy reality. What did not work first? Where did you pivot?

- Audit for sameness. Read one sentence from your CV, resume, or cover letter. Could ChatGPT have written it? If yes, add specificity until ChatGPT could not have written it without you. If you do use ChatGPT, at least give it the stories and statistics it needs to be specific about what you have done.

This month:

- Create proof. Produce one work sample that shows the process, not just the results. Ideas include a GitHub commit, a case study with failures included, or a walkthrough video.

- Prepare for the depth of behavioural interviews. Practise explaining your work using STAR with forensic detail.

- Build public evidence. Contribute to open-source projects. Publish writing that shows your thinking. Share and explain your work.

Why This Works

Recruiters are not just using AI detectors. They are also using pattern recognition.

When 500 applications sound identical, they filter by what stands out.

Specific, messy, real details stand out.

When trust is this low (91% of US hiring managers have caught or suspected misrepresentation), proof beats polish.

When CVs and resumes are losing credibility (only 37% of employers consider them reliable indicators of talent), what you can demonstrate replaces what you can describe.

The authenticity premium is not about rejecting AI tools. It is about using them to enhance your story, not replace your voice.

In 2026, being qualified is not enough. Being findable is not enough. You need to be believably, provably, undeniably you.

You must show work that only you could have done.

Across this series, the pattern is clear. Strong candidates are not failing because they are unqualified. They are failing because hiring systems have changed.

What to Do Next

This article is Part 4 of the series on how hiring works in 2026. If you have not read the earlier parts, here is where each one fits:

- Part 1 explains why AI hiring platforms now rank candidates against each other rather than just against the job description, and introduces the comparison bracket problem.

- Part 2 covers the three strategies for escaping the wrong bracket: Bracket Down, Signal Up, and Bypass Entirely.

- Part 3 explains how LinkedIn Recruiter search works, what filters recruiters use, and what you need to change for recruiters to find you before you even apply.

Want More Like This?

I write about what is changing in hiring: the patterns I see working with real clients, not generic advice. If this was useful, subscribe to my website newsletter and my LinkedIn newsletter so you do not miss any tips or updates.

Sources: